Don't Exclude Rollouts From Your RL Training Runs

I think there is a serious anti-pattern that sometimes shows up in papers doing online reinforcement learning at scale.

DAPO is a paper from ByteDance that proposes something that, on the surface, seems fine and practical: overly long samples during RL are undesirable, so we should mask them out so they don't contribute to the loss.

This secretly introduces something that you never want to introduce: systematic train/test bias.

In the deployment case, the user of the language model is (typically) not applying filtering or exclusion rules for things orthogonal to the training objective, such as length, repetition, etc. When you selectively reinforce an objective over a subset of the "true distribution" of rollouts, rather than over the full distribution of possible outcomes, you are not directly creating pressure against the thing you don't want.

Most telling is how the verl docs (bless your soul if you're using verl in 2026 btw) describe DAPO:

"Most experiments in the paper, including the best-performant one, are run without Overlong Filtering because it's somehow overlapping with Overlong Reward Shaping"

There's already cargo cult vibes here (the best runs didn't use the mask? why even have the mask then), but what's most alarming to me is the interpretation of overlong filtering as being merely... redundant? "Somehow overlapping".

What that wording obscures to the uninitiated is that, mechanistically, the techniques do not actually overlap at all, and that difference is subtly dangerous if you treat them as functionally equivalent.

Overlong Filtering by itself is something that, maybe, if only inadvertently, leads to selection towards generative subsequence tendencies that are naturally shorter, for some objectives, on some policies. In many cases, it has no guarantees or reason to, because it's not actually controlling the reward such that the policy optimizes for producing shorter rollouts.

Overlong Reward Shaping, on the other hand, is meaningfully distinct, because it changes the reward semantics. A linear ramp that scales the reward down with length, up to a point, creates subtle selection pressure favoring the rollouts that were terse and right over the ones that were long and right. The advantages are estimated in accordance with the desired trait, which creates pressure for all sampled outcomes to avoid the thing that isn't wanted.
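To make the "linear ramp" concrete, here is a minimal sketch of a length penalty in that spirit, fed into group-normalized advantages. Everything in it is an assumption for illustration: the function names, the 1/0 correctness reward, the `MAX_LEN` and `BUFFER_LEN` values, and the four-rollout group are all made up, and this is not claimed to be the exact formulation from the paper or any library.

```python
from statistics import mean, pstdev


def soft_overlong_penalty(length: int, max_len: int, buffer_len: int) -> float:
    """Hypothetical linear ramp: 0 below the buffer zone, falling to -1 at max_len."""
    ramp_start = max_len - buffer_len
    if length <= ramp_start:
        return 0.0
    if length <= max_len:
        return (ramp_start - length) / buffer_len
    return -1.0


def group_advantages(rewards: list[float]) -> list[float]:
    """Group-normalized advantages: mean-centered, scaled by the group std."""
    mu = mean(rewards)
    sigma = pstdev(rewards) or 1.0  # fall back to 1.0 when every reward is identical
    return [(r - mu) / sigma for r in rewards]


# Made-up group of four rollouts for one prompt: (is_correct, token_length).
rollouts = [(True, 2_000), (True, 7_000), (False, 3_000), (False, 9_000)]
MAX_LEN, BUFFER_LEN = 8_000, 2_000  # illustrative values only

rewards = [
    (1.0 if correct else 0.0) + soft_overlong_penalty(length, MAX_LEN, BUFFER_LEN)
    for correct, length in rollouts
]
advantages = group_advantages(rewards)

for (correct, length), r, a in zip(rollouts, rewards, advantages):
    print(f"correct={correct!s:5} len={length:5d} reward={r:+.2f} adv={a:+.2f}")
```

In this toy group, the short correct rollout ends up with a larger advantage than the long correct one, and the overlong failure ends up with the most negative advantage. Every sample stays in the loss; the length preference lives in the reward itself.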

But at inference time, there is no mask or exclusion logic. There's just a distribution of expected outcomes (ideally with 1.0 temperature and no arbitrary top_k/top_p or repetition penalty bandaids... you get the idea). As well as a maximum token length.

A possible steelman can be made: "if you have both overlong masking AND the reward shaping penalty is active, the penalty should do enough work to make the distribution you are training on ~mostly align with the true distribution, making the mask stop triggering later on".

But this steelman is still a steelman of the best case, where the penalty does enough work to make the full sample of outcomes equivalent to the subset with the mask applied (i.e. the RL converges to a point where the masking never triggers). Plausibly, the policy could be stubborn, the penalty could scale too weakly to create aggregate selection pressure against the problem, masking could distort hard problems systematically, etc. I could go on and on when it comes to pervasive possible pathologies.

But even worse is that in many public practitioner implementations of DAPO, the reward shaping portion is skipped entirely, meaning my steelman doesn't apply at all, and all of my "the actual evaluation case is systematically worse or undefined" criticisms apply.

Enter Open-R1 from HuggingFace, which should be seen as a cautionary tale. It's an older GRPO work, so methodological bluntness can be excused to some extent, but I imagine that some of that bluntness might not be recognized by practitioners even today.

"Overlong filtering is essential to stability, especially when μ>0"

Hm. I can buy that not penalizing length under a fixed generation length / truncation regime can cause divergences, especially since Open-R1 used vanilla GRPO without the CISPO-style importance sampling corrections that later became popular. This is dangerous for off-policy training more generally, but is somewhat mitigated by μ=1 being on-policy to begin with (μ>0 is the phrasing used, but μ=0 would just be... not training, so presumably they mean μ>1); but I digress.

"Despite these improvements, we've now hit a recurring new issue: although rewards go up, the downstream evals get worse"

Uh oh.

"this is most likely due to either a train/test or difficulty mismatch"

Uh oh (x2).

Honestly, there are too many confounding variables for me to causally prove that the masking is specifically at fault, but the primary reason I have for my suspicion here is essentially:

- systematic exclusion of longer rollouts has a fairly obvious reason to cause the model to get worse at harder problems; the default expectation of training a reasoning model is "harder problems will have longer reasoning traces".

In this thread (https://huggingface.co/spaces/open-r1/README/discussions/20), the proposed solution to the divergence is higher batch sizes:

"The mystery of the reward / eval mismatch has been solved: as shown below, if we scale up the prompt batch size from 32 -> 128 we see the eval collapse goes away and performance is roughly stable!"

But the plots from that post seem to tell a different story, one that doesn't look at all like stability improving monotonically with batch size.

- bigmath bs64: collapse/degradation

- bigmath bs128: didn't collapse (yet)

- aime2024 bs32: collapse/degradation

- aime2024 bs64: didn't collapse (yet)

- aime2024 bs128: on its way to collapse(?)

I feel fairly confident in saying that the bigger problem with the train/test mismatch in this case is that, despite the DAPO length mask being systematically biased, the degree to which it can bias a training run is a deeply convoluted, entangled function of:

- your dataset composition,

- your policy's current length distribution,

- how these variables non-linearly interact over the course of training.

Arguably, this is made even more confusing by the fact that the DAPO masking computes the advantages for the group before masking out the undesirable "overlong" samples. So it's not even a true estimation of the advantages over the subset you are treating as valid: it's biased by including the samples that you are going to exclude later as a part of the baseline, and it's also biased by treating the long sample as something that essentially never happened in spite of it being a part of the baseline.

So it's biased in two distinct ways which can interact with each other in ways that are unpredictable and essentially uninterpretable. Oof.
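A tiny worked example of the "advantages first, mask second" ordering described above, compared against computing the baseline only over the samples that are actually kept. This is a sketch under stated assumptions: the rewards and overlong flags are made up, the helper name is hypothetical, and the std normalization is dropped so the arithmetic is easy to follow.

```python
from statistics import mean


def centered(rewards: list[float]) -> list[float]:
    """Mean-centered advantages (std scaling omitted to keep the numbers readable)."""
    mu = mean(rewards)
    return [r - mu for r in rewards]


# Made-up group for one prompt; the last rollout ran past the length limit.
rewards = [1.0, 1.0, 0.0, 0.0]
overlong = [False, False, False, True]

# (a) Ordering described in the text: baseline over the FULL group, then mask.
full_group = centered(rewards)
mask_after = [0.0 if o else a for a, o in zip(full_group, overlong)]

# (b) For comparison: baseline over the kept subset only.
kept_rewards = [r for r, o in zip(rewards, overlong) if not o]
kept_adv = iter(centered(kept_rewards))
subset_only = [0.0 if o else next(kept_adv) for o in overlong]

print("mask after baseline:", mask_after, "sum =", sum(mask_after))
print("subset-only baseline:", [round(a, 3) for a in subset_only])
```

In (a), the overlong failure drags the baseline down to 0.5 and is then dropped, so the weights that actually reach the loss no longer sum to zero: the kept samples are being scored against a baseline that includes something the loss pretends never happened. In (b), the baseline is at least self-consistent over the kept subset, but the overlong rollout still contributes no signal at all. Neither version pushes against the overlong behavior.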

I could attempt to reason more from first principles about the long term consequences, but at some point, I feel that it should become obvious that this isn't worth defending further because the instinct to do it is simply... wrong.

It's an anti-pattern. Don't do it.

I have my own opinions about how to parsimoniously control for length, but I'm not going to get into those. What I'd be happy with is practitioners doing basically anything but this. Training a better prior policy to begin with (i.e. rejection sampling on traces that are shorter and doing lightweight SFT over those)? Sure. Experimenting with reward shaping or just directly borrowing the Overlong Reward Shaping and using it alone? Sure. Truncating at some arbitrary point (while preserving the truncated rollouts as failures, such that the yapping can be deinforced)? Sure. Anything that doesn't systematically bias the baseline by excluding samples that could've been real world outcomes during deployment should be superior.
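For the "truncate but keep it as a failure" option, a minimal sketch might look like the following. The function name, the 1/0 reward, `MAX_LEN`, and the rollouts are all hypothetical, and the std normalization is again left out for readability.

```python
from statistics import mean


def truncated_reward(would_be_correct: bool, length: int, max_len: int) -> float:
    """Score a rollout under a hard length cap.

    A rollout that hits the cap never produced a final answer, so it is scored
    as a failure regardless of what it might have said with more tokens.
    """
    if length > max_len:
        return 0.0
    return 1.0 if would_be_correct else 0.0


MAX_LEN = 8_000  # illustrative cap
# Made-up group: (would_be_correct, untruncated_length).
rollouts = [(True, 1_500), (True, 3_000), (False, 2_500), (True, 12_000)]

rewards = [truncated_reward(c, n, MAX_LEN) for c, n in rollouts]
baseline = mean(rewards)
advantages = [r - baseline for r in rewards]  # every rollout stays in the loss

print("rewards:   ", rewards)
print("advantages:", advantages)
```

Here the 12,000-token rollout gets a negative advantage instead of a zero weight, so the policy is actually pushed away from it, and the baseline is computed over exactly the samples that are trained on.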

And more broadly: if your first instinct when you see something undesirable in RL rollouts is to mask it out of the loss at the algorithmic level, you should examine that instinct with extreme prejudice. Masking does not induce pressure. It is the absence of pressure. And as far as the policy is concerned, any masked situation is a situation it doesn't need to avoid.

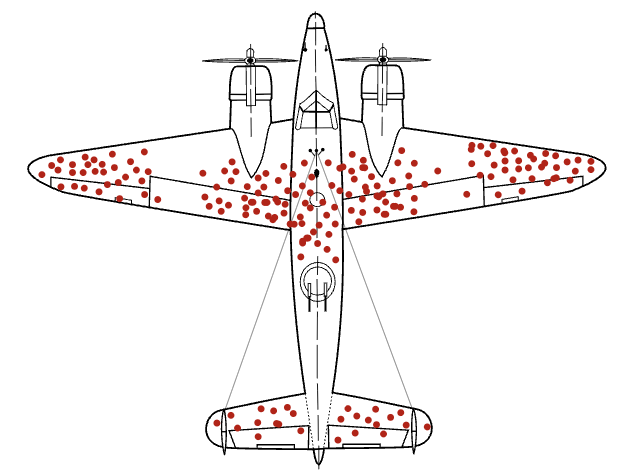

Sound familiar?

I risk being redundant at this point, but if something is undesirable, the correct response is to make your reward signal point in the direction of the desirable thing, not to pretend that the undesirable thing never happens.

The whole point of RL is that the policy learns from the distribution of its own behavior. This necessarily includes the behavior you don't like.

Especially the behavior you don't like.

also it's one letter away from DPO which is already a red flag tbhdesu